Working remotely is not new. However, when the COVID-19 pandemic hit the world, it became the new norm for businesses where working in the office was not essential. The shift from office to remote work changed the type of log data that employees and the business generate and, in many cases, increased its volume, sometimes significantly. Historical log data helps you see emerging trends during this shift so that you can make the necessary adjustments to maintain performance, availability, and security for your workforce.

Most of the time, you’re using log data to see the near-current state of your environment, perhaps a few seconds, minutes, or hours in the past. When working on a specific incident, you might look back a few days or set a particular range of time to search.

Keeping your log data as long as possible not only helps you solve issues (since you have access to the log files from the past several months or years), but also lets you get more value out of the data now. For example, say you are investigating the root cause of an incident in your environment. You notice that similar issues were present in the past and ask yourself, “Are these connected?” But in a data-driven world, how do you prove that your gut reaction is correct? First, search the near past for the root of this incident. With a little luck, you will find a few lines that give you something to work with and investigate further.

Management will often ask when a particular issue began. Historical log data can help to answer that. Since you know the root cause of the current problem, you can now hunt in the past and see when that error has come up before. With this information, you can answer questions about when an issue began and perhaps find clues to detect it more quickly in the future.
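To make the idea of hunting in the past concrete, here is a minimal sketch of how you might find the earliest occurrence of a known error signature. It assumes each log line starts with an ISO 8601 timestamp followed by a space and the message; the function name `first_occurrence` and the sample lines are hypothetical and only serve as illustration.

```python
import re
from datetime import datetime


def first_occurrence(lines, pattern):
    """Return the earliest timestamp among lines whose message matches pattern.

    Assumes each line looks like "<ISO 8601 timestamp> <message>".
    Returns None when nothing matches.
    """
    regex = re.compile(pattern)
    earliest = None
    for line in lines:
        stamp, _, message = line.partition(" ")
        if not regex.search(message):
            continue
        try:
            when = datetime.fromisoformat(stamp)
        except ValueError:
            continue  # skip lines without a parseable timestamp
        if earliest is None or when < earliest:
            earliest = when
    return earliest


# Hypothetical sample data, deliberately out of chronological order.
sample = [
    "2020-03-02T08:15:00 disk sda: I/O error on sector 1024",
    "2020-01-17T22:41:09 disk sda: I/O error on sector 512",
    "2020-02-11T10:00:00 sshd: accepted publickey for alice",
]

print(first_occurrence(sample, r"I/O error"))  # 2020-01-17 22:41:09
```

In practice you would run the equivalent query in your log management solution; the point is that the answer to "when did this begin?" is only as good as the oldest data you kept.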

Let’s look at another use case. On Linux, a monitoring daemon such as smartd checks your hard drives’ SMART parameters and writes the status to a log file on the local device. However, occasionally, some checks are not performed as expected. So, you watch for common failures, and if those happen regularly, you then act to resolve the issue. With historical data, you can establish precisely when the checks ran and identify the instances where they did not. If the vendor asks when you last got a status update, you can answer with specific, accurate information.
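Finding the checks that did not run comes down to looking for gaps between consecutive timestamps. The sketch below assumes you have already extracted the timestamps of the status messages and that the checks are expected once a day; the function name, the tolerance, and the sample values are assumptions for illustration.

```python
from datetime import datetime, timedelta


def missed_checks(timestamps, expected_interval, tolerance=timedelta(minutes=5)):
    """Return (previous, next) pairs whose gap exceeds the expected interval."""
    ordered = sorted(timestamps)
    gaps = []
    for prev, nxt in zip(ordered, ordered[1:]):
        # A gap longer than interval + tolerance means at least one check was skipped.
        if nxt - prev > expected_interval + tolerance:
            gaps.append((prev, nxt))
    return gaps


# Hypothetical daily checks; the run on May 3 is missing.
checks = [
    datetime(2021, 5, 1, 2, 0),
    datetime(2021, 5, 2, 2, 0),
    datetime(2021, 5, 4, 2, 1),
    datetime(2021, 5, 5, 2, 0),
]

for prev, nxt in missed_checks(checks, timedelta(days=1)):
    print(f"no check between {prev} and {nxt}")
```

The tolerance keeps small scheduling jitter from being reported as a missed check.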

The length and comprehensiveness of your event history depend on the amount of data you store. Some people don’t mind having the raw data around for months or years. Being able to search over a history of years might be a huge benefit at some point when investigating a tricky issue. The flip side is that storing that volume of data means committing to large compressed archives or keeping all that data in your log management solution.

One way to reduce that commitment is to collect only what you need and to trim any extra information before you store it. Modern log management solutions allow you to work with the incoming messages to keep only the valuable information and drop all unwanted details. A centralized log management solution allows you to analyze those logs and get value from something that most people just throw away.
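As a rough illustration of trimming, the sketch below keeps only an allowlist of fields from a parsed message and drops empty values. The field names and the `trim_message` helper are hypothetical; in a log management solution you would normally express this as a processing rule rather than as hand-written code.

```python
# Hypothetical allowlist: the only fields worth paying storage for.
KEEP_FIELDS = frozenset({"timestamp", "source", "level", "message"})


def trim_message(record, keep=KEEP_FIELDS):
    """Return a copy of record with only allowlisted, non-empty fields."""
    return {
        key: value
        for key, value in record.items()
        if key in keep and value not in ("", None)
    }


incoming = {
    "timestamp": "2021-05-04T02:01:00Z",
    "source": "web-01",
    "level": "warning",
    "message": "disk usage above 90%",
    "debug_trace": "frame 0 ... frame 41",  # verbose detail nobody searches for
    "session_cookie": "",                   # empty, nothing to keep
}

print(trim_message(incoming))
```

An allowlist is the more conservative design: new, unexpected fields are dropped by default instead of silently growing your storage footprint.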

Having historical data available for searching enables you to see trends over time and make predictions about the future. If done right, it works seamlessly and improves your trust in your environment through the knowledge you gain from the past.

Storing Log Data in Graylog

How long you can store your logs with Graylog depends on your ingest rate, the log size, and the available storage. Once Graylog is up and running, you can see your ingest rate into storage in the overview.

The amount of space taken on your disks depends on your storage configuration. The storage space varies and is hard to calculate upfront, so Graylog displays the currently used storage and the configuration in one place.
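Even though exact numbers are hard to predict, a back-of-the-envelope estimate helps when planning. The sketch below divides available storage by the daily on-disk footprint. The `overhead_factor` (index overhead relative to raw ingest) and `replicas` parameters are assumptions you would need to replace with values observed in your own environment; nothing here is a Graylog formula.

```python
def estimated_retention_days(storage_gb, daily_ingest_gb,
                             overhead_factor=1.0, replicas=0):
    """Rough number of days of logs that fit into the available storage.

    overhead_factor: assumed on-disk size relative to raw ingest (measure it).
    replicas: number of additional copies kept of each stored message.
    """
    per_day_gb = daily_ingest_gb * overhead_factor * (1 + replicas)
    if per_day_gb <= 0:
        raise ValueError("daily footprint must be positive")
    return storage_gb / per_day_gb


# Hypothetical figures: 2 TB of disk, 20 GB/day ingest, 30% overhead, one replica.
days = estimated_retention_days(2000, 20, overhead_factor=1.3, replicas=1)
print(f"roughly {days:.0f} days of retention")
```

Compare the estimate against the storage usage your system actually reports after a few weeks and adjust the overhead factor accordingly.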

You can configure how long you want to store your messages, based on index sets. You can see the configuration settings in the documentation.

Searching Historical Data in Graylog

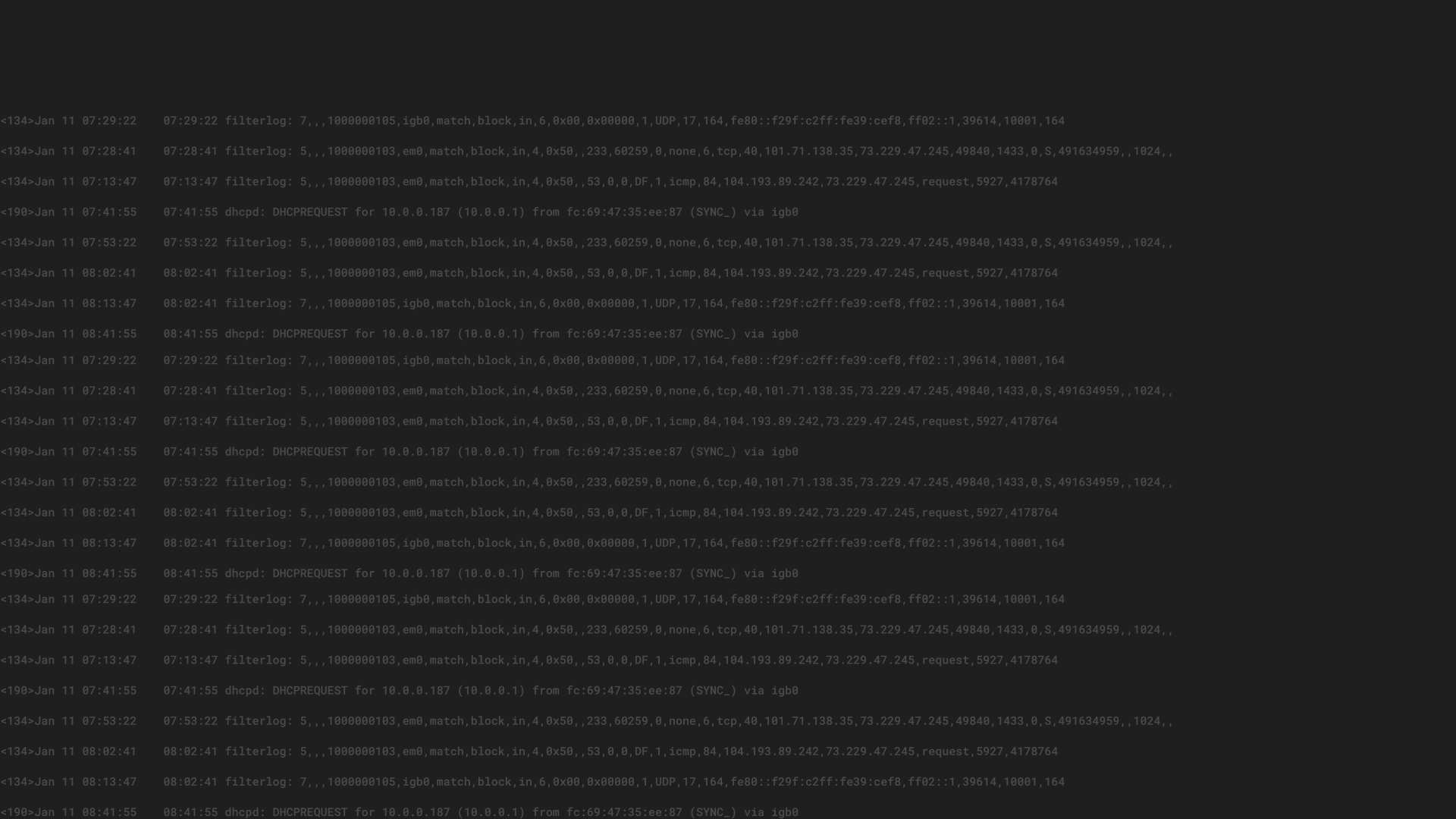

In Graylog, you have three options to select the timeframe of your search:

- Relative: from now into the past

- Absolute: a configurable time and date

- Keyword: text that conveys a timeframe, such as “last week”

The relative search is the most commonly used: typically, users search for data from the last X minutes or hours until now. When looking into historical data, however, the absolute search with specific dates is more important. You can also combine the two: run an absolute search for the same seven days one year ago and compare it with a relative search over the last seven days. Comparing the near past with the historical data lets you conduct a trend analysis over a broader period than just the last few days. You can look at specific timeframes or the entire previous year, depending on how long your system collects and keeps the log messages.
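If you want to work out the dates for such a year-over-year comparison, the following sketch computes both windows. The helper name `comparison_windows` is hypothetical; the resulting start and end values are simply what you would enter as the absolute time range.

```python
from datetime import datetime, timedelta, timezone


def comparison_windows(now, days=7, years_back=1):
    """Return ((recent_start, recent_end), (historical_start, historical_end))."""
    recent = (now - timedelta(days=days), now)
    try:
        then_end = now.replace(year=now.year - years_back)
    except ValueError:
        # February 29 has no counterpart in a non-leap year; fall back to the 28th.
        then_end = now.replace(year=now.year - years_back, day=28)
    historical = (then_end - timedelta(days=days), then_end)
    return recent, historical


now = datetime(2021, 6, 15, 12, 0, tzinfo=timezone.utc)
recent, historical = comparison_windows(now)

print("recent:    ", recent[0].isoformat(), "to", recent[1].isoformat())
print("historical:", historical[0].isoformat(), "to", historical[1].isoformat())
```

Using the same window length for both searches keeps the comparison fair; keep in mind that the same calendar dates can fall on different weekdays from one year to the next, which matters for workloads with a weekly rhythm.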

Working with historical data in Graylog is nearly the same as searching for the near real-time events of the last few minutes, which makes it easy and quick to look into the past to inform decisions today. After all, as Alfred North Whitehead once said, “The only use of knowledge of the past is to equip us for the present.”

Centralized log management lets you grant people access to log data without giving them access to the servers themselves. You can also correlate data from different sources, such as the operating system, your applications, and the firewall. Another benefit is that users do not need to log in to hundreds of devices to find out what is happening. You can also use data normalization and enhancement rules to create value for people who might not be familiar with a specific log type.