AWS is a popular destination for IaaS that offers quickly scalable resources to meet even the largest customer demands. Scaling like this can generate a large volume of logs that you need to monitor to keep up with your cybersecurity goals. Getting those logs into a SIEM or centralized log management platform such as Graylog is key to proactive monitoring and alerting.

Where do you get logs from AWS?

Amazon keeps logs of the environment in its CloudWatch service. This centralized point can hold logs from running servers, network traffic flows, application logs, and metrics, to name a few. Having one location for logs is a quick way to gain broad visibility through a single common interface.

These logs are written at different intervals, and some can only be delivered to an S3 bucket. With Kinesis streams, however, you can receive this data in near real-time and have it ingested by a log solution.
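To make the Kinesis path more concrete: when CloudWatch Logs forwards events to a Kinesis stream through a subscription filter, each record carries gzip-compressed JSON containing a `logGroup`, a `logStream`, and a list of `logEvents`. The sketch below decodes one such payload using only the Python standard library. It is an illustration of the data format, not how Graylog's input is implemented; the function name `decode_cloudwatch_record` and all sample values are made up for this example.

```python
import gzip
import json


def decode_cloudwatch_record(data: bytes) -> list:
    """Decode one Kinesis record written by a CloudWatch Logs subscription filter."""
    payload = json.loads(gzip.decompress(data))
    # CONTROL_MESSAGE records are reachability checks and carry no log data.
    if payload.get("messageType") != "DATA_MESSAGE":
        return []
    return [
        {
            "log_group": payload["logGroup"],
            "log_stream": payload["logStream"],
            "timestamp_ms": event["timestamp"],
            "message": event["message"],
        }
        for event in payload["logEvents"]
    ]


# Build a sample record shaped like a subscription filter payload (values are invented).
sample = gzip.compress(json.dumps({
    "messageType": "DATA_MESSAGE",
    "owner": "123456789012",
    "logGroup": "/example/app",
    "logStream": "i-0abc",
    "subscriptionFilters": ["example-filter"],
    "logEvents": [
        {"id": "1", "timestamp": 1700000000000, "message": "user login ok"},
    ],
}).encode("utf-8"))

for entry in decode_cloudwatch_record(sample):
    print(entry["log_group"], entry["message"])
```

In practice the bytes would come from a Kinesis consumer rather than a locally built sample; a log platform's AWS input typically takes care of this step for you.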

Graylog has built-in connectors for AWS CloudWatch and Kinesis, so you only need to enter a few credentials to start ingesting your logs. If you want to have a look, we recently wrote a post explaining how to ingest CloudTrail logs using the Graylog AWS plugin.

Why ingest AWS Logs?

Ingesting logs from AWS allows you to correlate data across your entire environment rather than just the cloud. Being able to tie together user names on both corporate systems and cloud systems gives you one cohesive monitoring platform.

As cloud logs are ingested, additional metadata can be added to enrich them in real time. For example, taking network traffic logs of outside connections into the cloud environment and performing Threat Intelligence and Geo-Location lookups on each IP address can quickly reveal a known attacker or an unexpected connection.

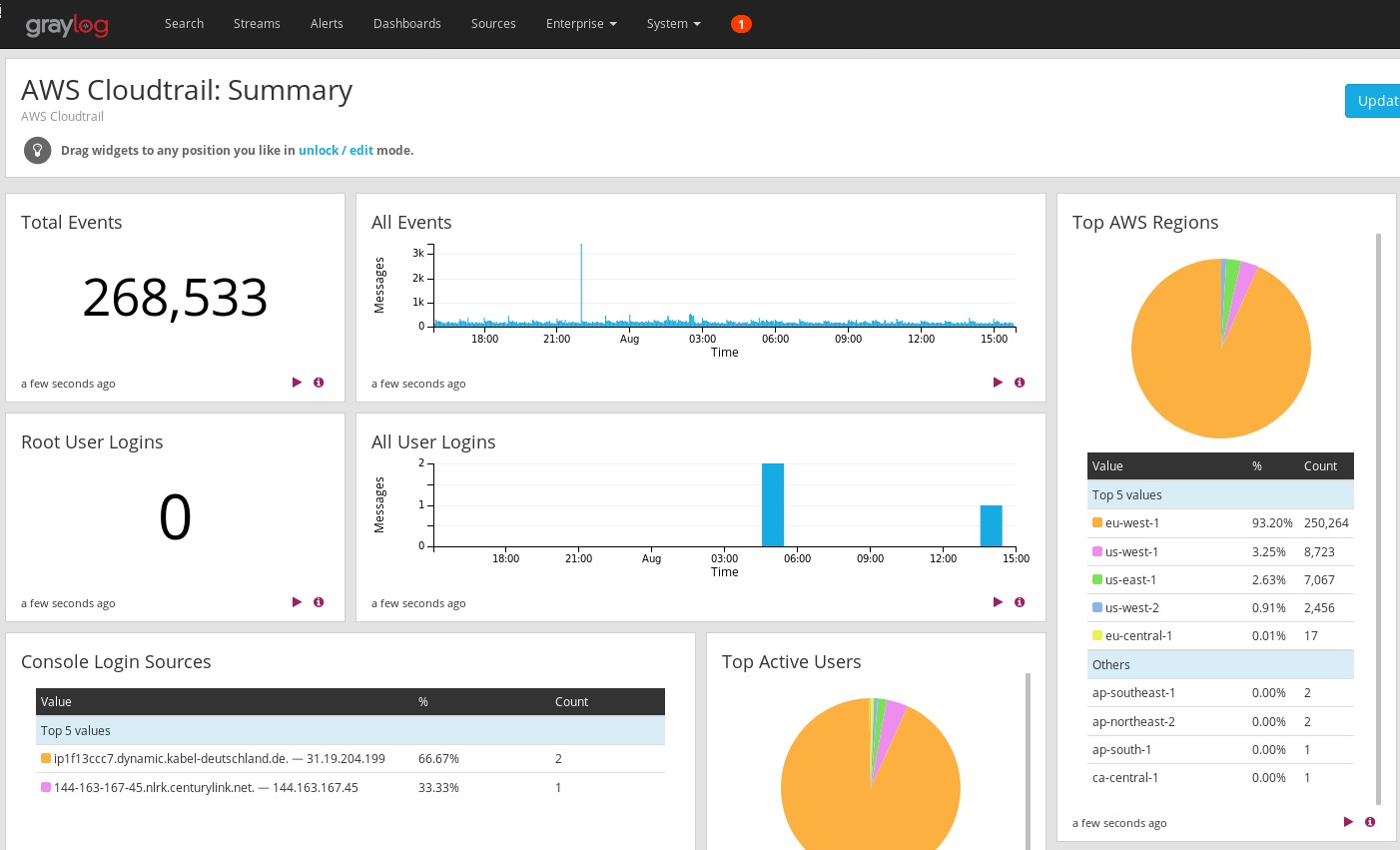
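As a rough illustration of this kind of enrichment, the sketch below parses a VPC Flow Log line in the default version 2 field order and adds two extra fields from lookup tables. The tables `THREAT_NETWORKS` and `GEO_BY_NETWORK`, the function name, and the placeholder country codes are all hypothetical stand-ins; a real deployment would query a threat intelligence feed and a GeoIP database instead.

```python
import ipaddress

# Default VPC Flow Log (version 2) field order.
FLOW_FIELDS = (
    "version", "account_id", "interface_id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log_status",
)

# Hypothetical lookup tables using documentation-only address ranges.
THREAT_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]
GEO_BY_NETWORK = {
    ipaddress.ip_network("203.0.113.0/24"): "ZZ",
    ipaddress.ip_network("198.51.100.0/24"): "YY",
}


def enrich_flow_record(line: str) -> dict:
    """Split a flow log line into named fields and add lookup results."""
    record = dict(zip(FLOW_FIELDS, line.split()))
    record["src_threat_listed"] = False
    record["src_country"] = "unknown"
    try:
        src = ipaddress.ip_address(record["srcaddr"])
    except ValueError:
        # NODATA and SKIPDATA records carry "-" instead of addresses.
        return record
    record["src_threat_listed"] = any(src in net for net in THREAT_NETWORKS)
    for network, country in GEO_BY_NETWORK.items():
        if src in network:
            record["src_country"] = country
            break
    return record


line = ("2 123456789012 eni-0abc1234 203.0.113.12 10.0.1.5 "
        "49152 22 6 20 4249 1700000000 1700000060 ACCEPT OK")
enriched = enrich_flow_record(line)
print(enriched["srcaddr"], enriched["src_country"], enriched["src_threat_listed"])
```

Because the extra fields are attached at ingest time, searches and dashboards can filter on them directly rather than repeating the lookup for every query.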

Dashboards are another analytic feature that can be applied to give a breakdown of the services in use, the ports and protocols most commonly accessed, and user authentication data – all in one place.

On top of providing an easily accessible visualization of logs, a centralized platform can trigger alerts on correlated events and send real-time emails to administrators, keeping them apprised of what is happening in the cloud.
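To show what a correlated event might look like, here is a minimal sketch of one rule: raise an alert when the same user fails to log in several times within a short window and the failures come from more than one source, such as a corporate VPN and the AWS console. The class name, the window, and the threshold are assumptions chosen for illustration, and this is not how any particular product implements its alerting; sending the email is left out.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 300  # hypothetical look-back window
THRESHOLD = 3         # hypothetical number of failures that triggers an alert


class FailedLoginCorrelator:
    def __init__(self):
        # user -> deque of (timestamp, source) for recent failed logins
        self._failures = defaultdict(deque)

    def observe(self, timestamp, user, source, success):
        """Return an alert dict when the rule fires, otherwise None."""
        if success:
            return None
        window = self._failures[user]
        window.append((timestamp, source))
        # Drop failures that have fallen out of the look-back window.
        while window and window[0][0] <= timestamp - WINDOW_SECONDS:
            window.popleft()
        sources = {src for _, src in window}
        if len(window) >= THRESHOLD and len(sources) > 1:
            return {"user": user, "failures": len(window), "sources": sorted(sources)}
        return None


correlator = FailedLoginCorrelator()
events = [
    (100, "alice", "corp-vpn", False),
    (160, "alice", "aws-console", False),
    (220, "alice", "aws-console", False),
]
for event in events:
    alert = correlator.observe(*event)
    if alert:
        print("ALERT:", alert)
```

A rule like this only works when corporate and cloud authentication logs land in the same place with a consistent user name field, which is the main argument for centralizing them.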

Logs in the cloud also have a limit on how long they are kept available for use. These retention settings can be hard to change, and keeping logs in the cloud for long periods can be very costly. Information in a centralized logging architecture can be archived according to company policy and made available only to those who need the data through role-based access control.

Conclusion

With companies relying so heavily on IaaS, collecting data from those environments is extremely important and valuable. Providing real-time insight into how services are performing, alerting when issues arise, and keeping a historical archive of logs are the reasons centralized logging of AWS is essential.